VerbalScribe vs. Human Interpreters for Multilingual Events: Cost, Scalability, and Quality Compared for 2026

If you are planning a multilingual event in 2026, you are facing a question that did not exist a decade ago: should you hire a traditional team of human interpreters, invest in an AI-powered multilingual captioning platform, or combine both? Understanding the cost of multilingual event captioning is no longer just a matter of comparing hourly rates. It involves evaluating scalability across languages, quality thresholds for different content types, logistical complexity, and the total cost of delivering a reliable experience to every attendee.

The short answer is that human interpreters still offer the highest quality for nuanced, high-stakes spoken interpretation, but they come with significant cost, logistical overhead, and hard limits on language coverage. AI-powered platforms like VerbalScribe dramatically reduce per-language costs, scale to dozens of languages simultaneously, and integrate directly into existing production workflows. For most professional events in 2026, a hybrid approach — using human interpreters for primary languages and AI captioning for broader multilingual coverage — delivers the strongest combination of quality, reach, and budget efficiency.

This post provides a transparent breakdown of both approaches so you can make the right decision for your event, your audience, and your budget.

The Real Cost of Human Interpreters vs. AI Multilingual Captioning in 2026

Cost is typically the first factor event planners evaluate, and it is where the gap between traditional interpretation and AI captioning has widened most significantly.

Human Interpreter Costs

Simultaneous interpretation for live events requires at least two interpreters per language pair (they rotate every 20-30 minutes to maintain accuracy). For a full-day event, you also need interpretation booths, receivers, headsets, and a technician to manage the equipment.
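The rotation described above can be sketched as a simple schedule. This is an illustration only: the fixed 30-minute shift, the session times, and the interpreter names are assumptions, and real teams adjust hand-offs to the content and the speaker.

```python
from datetime import datetime, timedelta

def rotation_schedule(start, end, interpreters, shift_minutes=30):
    """Alternate interpreters in fixed-length shifts between start and end."""
    schedule = []
    shift_start = start
    turn = 0
    while shift_start < end:
        # The last shift is cut short if the session ends mid-shift.
        shift_end = min(shift_start + timedelta(minutes=shift_minutes), end)
        schedule.append((shift_start, shift_end, interpreters[turn % len(interpreters)]))
        shift_start = shift_end
        turn += 1
    return schedule

# Hypothetical 90-minute session covered by one language team of two.
session = rotation_schedule(
    datetime(2026, 3, 10, 9, 0),
    datetime(2026, 3, 10, 10, 30),
    ["Interpreter A", "Interpreter B"],
)
for begin, finish, name in session:
    print(f"{begin:%H:%M}-{finish:%H:%M}  {name}")
```

Each language pair needs its own schedule like this one, which is why staffing grows with every language added.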

Here is a realistic cost breakdown for a one-day corporate conference in 2026:

| Cost Category | Per Language (USD) | 5 Languages (USD) | 10 Languages (USD) |
|---|---|---|---|
| Interpreter team (2 per language) | $2,500–$4,000 | $12,500–$20,000 | $25,000–$40,000 |
| Interpretation booth rental | $1,200–$2,500 | $6,000–$12,500 | $12,000–$25,000 |
| Receiver/headset units (200 attendees) | $800–$1,500 | $800–$1,500 | $800–$1,500 |
| Equipment technician | $600–$1,200 | $600–$1,200 | $600–$1,200 |
| Travel and per diem (if applicable) | $500–$2,000 | $2,500–$10,000 | $5,000–$20,000 |
| Estimated total | $5,600–$11,200 | $22,400–$45,200 | $43,400–$87,700 |

These figures reflect industry averages across North American and European markets. Costs increase further for rare language pairs, specialized subject matter interpreters, or multi-day events.
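For planners who want to adapt the table to a different number of languages, the arithmetic behind it can be sketched as follows. The split into per-language and shared line items is inferred from how the rows scale in the table. The function name is hypothetical, and the dollar ranges are the article's estimates, not quotes.

```python
# (low, high) USD ranges taken from the table above.
# Per-language items are multiplied by the number of languages.
PER_LANGUAGE = {
    "interpreter_team": (2_500, 4_000),
    "booth_rental": (1_200, 2_500),
    "travel_per_diem": (500, 2_000),
}
# Shared items are paid once, however many languages are offered.
SHARED = {
    "receivers_headsets": (800, 1_500),
    "equipment_technician": (600, 1_200),
}

def human_interpretation_cost(num_languages):
    """Return the (low, high) one-day estimate in USD."""
    low = num_languages * sum(lo for lo, _ in PER_LANGUAGE.values())
    high = num_languages * sum(hi for _, hi in PER_LANGUAGE.values())
    low += sum(lo for lo, _ in SHARED.values())
    high += sum(hi for _, hi in SHARED.values())
    return low, high

for n in (1, 5, 10):
    low, high = human_interpretation_cost(n)
    print(f"{n:>2} languages: ${low:,} to ${high:,}")
```

Under this reading of the table, each added language costs $4,200 to $8,500 on top of a fixed $1,400 to $2,700 for shared equipment and the technician.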

AI Captioning Platform Costs

AI multilingual captioning platforms operate on a fundamentally different cost structure. There is no per-language staffing requirement, no physical booth infrastructure, and no headset distribution.

| Cost Category | Typical Range (USD) |
|---|---|
| Platform subscription or event license | $500–$3,000 per event |
| Additional languages (beyond base) | Often included or $50–$200 per language |
| Technical setup and support | Included in premium tiers |
| Attendee access | Via personal device (no hardware) |
| Estimated total (10+ languages, one-day event) | $1,000–$4,000 |

When you are covering five or more languages, multilingual event captioning through an AI platform can cost 10 to 20 times less than an equivalent human interpreter setup.
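Because both sides of the comparison are ranges, the multiplier depends on which ends you compare. The sketch below computes a conservative, midpoint, and optimistic ratio from the totals in the two tables. Reusing the 10+ language AI estimate for the five-language case is an assumption made here for illustration.

```python
# (low, high) one-day estimates in USD, copied from the two cost tables above.
HUMAN_TOTALS = {5: (22_400, 45_200), 10: (43_400, 87_700)}
AI_TOTAL = (1_000, 4_000)  # quoted for 10+ languages; reused for 5 as an assumption

def cost_multiplier(human, ai):
    """Return (conservative, midpoint, optimistic) human-to-AI cost ratios."""
    conservative = human[0] / ai[1]  # cheapest human setup vs. priciest AI plan
    optimistic = human[1] / ai[0]    # priciest human setup vs. cheapest AI plan
    midpoint = (human[0] + human[1]) / (ai[0] + ai[1])
    return conservative, midpoint, optimistic

for languages, human in HUMAN_TOTALS.items():
    low, mid, high = cost_multiplier(human, AI_TOTAL)
    print(f"{languages} languages: {low:.1f}x to {high:.1f}x (midpoint {mid:.1f}x)")
```

The spread is wide: roughly 5.6x to 45x at five languages and about 11x to 88x at ten, with midpoints near 13.5x and 26x. The headline multiplier is best read as a mid-range figure, not a guarantee.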

Where Cost Comparisons Get Nuanced

Raw cost comparison does not tell the full story. Human interpreters provide spoken audio output, which serves attendees who prefer listening over reading. AI captioning provides text-based output, which serves deaf and hard-of-hearing attendees, non-native speakers, and anyone in a noisy environment. These are different modalities solving overlapping but not identical needs. The right question is not which is cheaper, but which combination of approaches delivers the accessibility and inclusion your event requires at a sustainable cost.

Scalability: How Each Approach Handles More Languages

The Hard Ceiling of Human Interpretation

Every additional language you add to a human interpretation setup requires two more interpreters, another booth, and additional coordination. Beyond five or six languages, the logistics become substantial. Finding qualified simultaneous interpreters for less common languages — Tagalog, Swahili, Vietnamese, or Bengali — can be extremely difficult depending on your event location. Lead times for booking specialized interpreters can extend to months.

For events with international audiences spanning 10, 15, or 20 language groups, full human interpretation coverage is often not just expensive but operationally impractical.

How AI Captioning Scales

AI-powered platforms like VerbalScribe handle additional languages through software, not staffing. Adding a tenth language requires no more infrastructure than adding a third. This means event teams can offer 20 or more language options to attendees without proportional increases in cost, space, or coordination.

Attendees access captions on their own devices — phones, tablets, or laptops — eliminating the need to distribute and collect physical receivers. This also supports hybrid and live-streamed events seamlessly, since remote attendees get the same multilingual access as those in the room.

Scalability Comparison at a Glance

| Factor | Human Interpreters | AI Captioning Platform |
|---|---|---|
| Languages supported per event | Typically 2–6 | 20–50+ |
| Cost increase per added language | $4,200–$8,500+ | Minimal or included |
| Physical space requirements | Booth per language | None |
| Remote/hybrid attendee access | Requires additional streaming setup | Built-in |
| Lead time for rare languages | Weeks to months | Immediate |
| Staff coordination complexity | High | Low |

Quality and Accuracy: Where Each Approach Excels

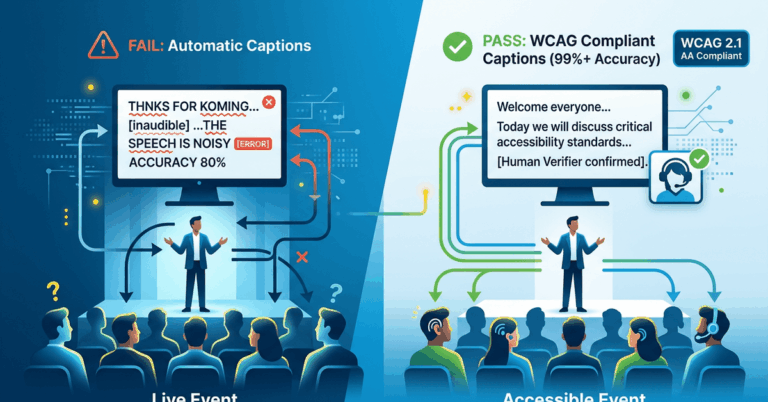

This is the area where honest evaluation matters most. AI captioning has improved dramatically, but it is not a universal replacement for human interpretation in every context.

Where Human Interpreters Lead

Human interpreters excel in situations involving high-context communication: diplomatic negotiations, legal proceedings, emotionally sensitive content, heavy use of idiom, cultural nuance, and rapid speaker changes with overlapping dialogue. A skilled simultaneous interpreter captures tone, intent, and subtext in ways that current AI models cannot fully replicate.

For keynote addresses at high-profile corporate events, or for content involving complex regulatory language, human interpreters provide a level of contextual accuracy that remains unmatched.

Where AI Captioning Performs Well

AI captioning platforms perform at a high level for structured presentations, panel discussions, lectures, training sessions, and worship services where the content follows a relatively predictable format. When speakers use clear diction, follow a logical structure, and speak at a moderate pace, AI transcription accuracy rates in primary languages consistently reach 90-95% or higher.
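The article does not say how these accuracy figures are measured. One common yardstick for transcription is word error rate (WER), with accuracy taken as 1 minus WER. The sketch below assumes that definition, and the sample sentences are invented for illustration.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # Levenshtein distance over words, keeping one row at a time.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)

# Invented example: the caption drops the final word of a ten-word sentence.
spoken = "please welcome our keynote speaker to the main stage today"
captioned = "please welcome our keynote speaker to the main stage"
wer = word_error_rate(spoken, captioned)
print(f"WER {wer:.0%}, accuracy {1 - wer:.0%}")
```

Scoring a few minutes of your own speakers' audio this way, against a human-checked transcript, gives a more relevant number than any vendor average.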

For multilingual output, AI translation quality has reached a point where attendees can follow content clearly and participate meaningfully. It may not capture every idiomatic subtlety, but for the purpose of comprehension and inclusion, it meets the threshold that most events require.

Quality Comparison by Event Type

| Event Type | Human Interpreter Advantage | AI Captioning Advantage |
|---|---|---|
| Diplomatic or legal proceedings | High | Low |
| Corporate keynotes | Moderate to High | Moderate |
| Multi-session conferences | Moderate | High (scalability) |
| University lectures | Moderate | High |
| Corporate training | Low to Moderate | High |
| Worship services | Moderate | High |
| Hybrid/virtual events | Low (logistically complex) | High |
| Events needing 10+ languages | Not feasible for most budgets | High |

The Hybrid Model: Combining Human Interpreters with AI Captioning

For many professional events in 2026, the most effective strategy is not choosing one approach over the other. It is layering them intentionally.

How a Hybrid Approach Works

A practical hybrid model assigns human interpreters to the one or two primary non-English languages where your largest audience segments sit and where content nuance matters most. AI captioning then covers all remaining languages, providing broad multilingual access without proportional cost increases.

This approach also closes an accessibility gap that human interpretation alone cannot address. Simultaneous interpretation provides audio output, so it does not serve deaf or hard-of-hearing attendees. AI captioning provides visual text output, which supports ADA and other accessibility requirements regardless of language.

Example: A 2,000-Person International Conference

Consider a three-day corporate summit with attendees from 30 countries. The primary audience segments are English, Spanish, and Mandarin speakers, with smaller groups representing French, Portuguese, Japanese, Korean, Arabic, German, and several other languages.

A hybrid setup might look like this:

- Human simultaneous interpreters for Spanish and Mandarin (the two largest non-English groups)

- VerbalScribe AI captioning for all other languages, including English captions for accessibility

- Total interpretation booth requirement reduced from 10+ to 2

- All attendees access captions on personal devices

- Hybrid and remote attendees receive identical multilingual support

The multilingual event captioning cost for the AI component in this scenario represents a fraction of what full human coverage across all languages would require, while the audience experience remains comprehensive.
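The assignment rule in this example (human interpreters for the largest non-English groups, AI captioning for everything else) can be expressed as a short sketch. The attendee counts below are hypothetical numbers chosen to total 2,000, and the function name is made up for illustration.

```python
def assign_coverage(audience_by_language, source_language="English", human_slots=2):
    """Give human interpreters to the largest non-source groups, AI to the rest."""
    others = {
        language: count
        for language, count in audience_by_language.items()
        if language != source_language
    }
    ranked = sorted(others, key=others.get, reverse=True)
    return {
        "human_interpreters": ranked[:human_slots],
        # Source-language captions are included for accessibility.
        "ai_captioning": [source_language] + ranked[human_slots:],
    }

# Hypothetical registration data for the 2,000-person summit.
audience = {
    "English": 900, "Spanish": 400, "Mandarin": 300, "French": 100,
    "Portuguese": 80, "Japanese": 70, "Korean": 60, "Arabic": 50, "German": 40,
}
plan = assign_coverage(audience)
print("Human interpreters:", ", ".join(plan["human_interpreters"]))
print("AI captioning:", ", ".join(plan["ai_captioning"]))
```

Audience size is only one input. Content sensitivity may justify a human team for a smaller group, so treat the ranking as a starting point.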

Logistical Complexity and Production Integration

Setup and Coordination for Human Interpreters

Managing a full interpretation team requires advance coordination that begins weeks or months before the event. You need to secure interpreters, arrange travel, set up booths, test audio feeds, distribute receivers, and brief interpreters on subject matter and terminology. On event day, a dedicated technician monitors the interpretation feeds, and any interpreter absence or technical failure can leave an entire language group without access.

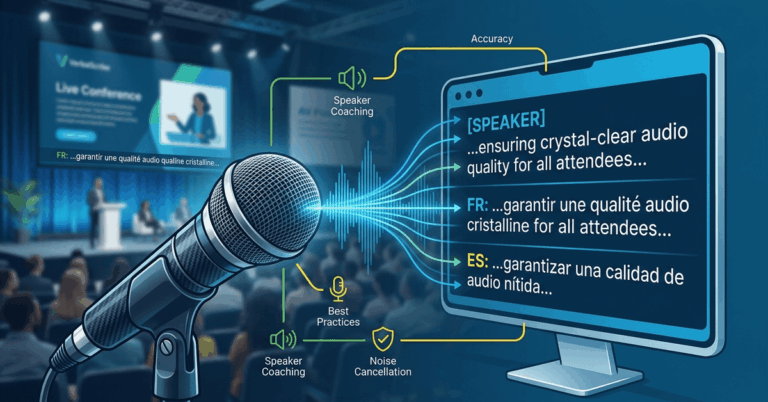

Setup for AI Captioning Platforms

Platforms built for live event production — like VerbalScribe — are designed to integrate into existing audio workflows. Setup typically involves routing a clean audio feed from the production console into the platform, configuring language outputs, and sharing an access link or QR code with attendees.

For production teams already working with tools like ProPresenter or Dante-networked audio, the integration is straightforward. There is no physical booth setup, no receiver logistics, and no interpreter staffing to manage on the day of the event.

Risk and Redundancy

Live events demand reliability. Human interpreters introduce single points of failure at the individual level — an interpreter who falls ill the morning of the event creates an immediate gap. Professional AI platforms mitigate this through infrastructure redundancy, automated failover, and consistent availability across all configured languages.

Neither approach is immune to failure. Network issues can affect AI platforms. Interpreter fatigue affects human teams. The difference is in how each model handles redundancy at scale.

Making the Right Decision for Your Event in 2026

Choosing between human interpreters, AI multilingual captioning, or a hybrid model depends on your specific event profile. There is no universal answer, but there are clear guidelines.

If your event involves two or three languages, high-stakes content, and a budget that supports full interpretation, human interpreters remain an excellent choice. If your event needs to cover five or more languages, serves a hybrid or virtual audience, or operates under tighter budget constraints, an AI captioning platform delivers multilingual access at a fraction of the traditional cost.

For most professional events in 2026, the hybrid model offers the strongest outcome. It preserves human quality where it matters most and extends multilingual access broadly through AI captioning — all while reducing the overall multilingual event captioning cost and logistical burden on your production team.
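These guidelines can be written down as a rough decision helper. The sketch is a simplification: the thresholds come from the paragraphs above, while the rule that high-stakes content always keeps a human team is an interpretation, not something the guidelines state outright.

```python
def recommend_approach(num_languages, high_stakes, remote_audience, full_budget):
    """Map a basic event profile to one of the three approaches."""
    if num_languages <= 3 and high_stakes and full_budget:
        return "human interpreters"
    if high_stakes:
        # Assumption: keep humans on the primary languages, AI for the rest.
        return "hybrid"
    if num_languages >= 5 or remote_audience or not full_budget:
        return "AI captioning"
    return "hybrid"

# Hypothetical event profiles.
print(recommend_approach(2, high_stakes=True, remote_audience=False, full_budget=True))
print(recommend_approach(12, high_stakes=False, remote_audience=True, full_budget=False))
print(recommend_approach(10, high_stakes=True, remote_audience=True, full_budget=True))
```

A real decision would also weigh audience size per language and accessibility obligations, which this helper leaves out.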

VerbalScribe is built for exactly this use case. It delivers real-time multilingual captions with enterprise-grade reliability, integrates into professional production workflows, and scales to dozens of languages without multiplying your budget or your setup complexity. If you are planning a multilingual event and want to see how AI captioning fits alongside your existing interpretation strategy, the VerbalScribe team can walk you through a production-ready setup tailored to your event.

Frequently Asked Questions

How much does multilingual event captioning cost compared to hiring interpreters?

AI-powered multilingual captioning platforms typically cost between $1,000 and $4,000 per event for 10 or more languages. A comparable human interpreter setup for 10 languages can range from about $43,400 to $87,700 or more when factoring in interpreter teams, booth rentals, equipment, and travel. The per-language cost difference is significant, especially as you add more languages.

Can AI captioning replace human interpreters entirely?

For many event types — conferences, lectures, training sessions, and worship services — AI captioning provides sufficient accuracy and comprehension for multilingual audiences. However, for high-stakes content involving legal, diplomatic, or emotionally nuanced communication, human interpreters still offer superior contextual accuracy. A hybrid approach is often the most effective solution.

How many languages can an AI captioning platform support at one event?

Platforms like VerbalScribe can support 20 to 50 or more languages simultaneously at a single event. Each attendee selects their preferred language on their personal device. Adding languages does not require additional physical infrastructure or staffing.

Does AI captioning meet accessibility compliance requirements?

Yes. AI-generated captions provide real-time text output that serves deaf and hard-of-hearing attendees, supporting ADA compliance and accessibility mandates. This is an area where AI captioning actually exceeds traditional interpretation, which only provides audio output and does not address the needs of attendees with hearing disabilities.

What audio setup does VerbalScribe require for a live event?

VerbalScribe requires a clean audio feed from your production console. It integrates with standard professional AV setups, including ProPresenter and Dante-networked audio workflows. Setup involves routing the audio feed into the platform and configuring your language outputs — no interpretation booths, receivers, or additional on-site staffing required.

Is the hybrid model more expensive than using AI captioning alone?

Yes, because you are still hiring human interpreters for one or two primary languages. However, it is substantially less expensive than full human interpretation across all languages. The hybrid model lets you allocate your interpretation budget where human quality has the highest impact and use AI captioning for broader coverage, keeping overall multilingual event captioning cost manageable while maximizing audience reach.