Why Auto-Captions Fail at Live Events (And What WCAG Actually Requires for Accessibility Compliance)

If you have ever watched auto-generated captions mangle a speaker’s name, turn a technical term into gibberish, or lag so far behind the presenter that the audience gives up reading, you already understand the core problem. Auto-captions are convenient. They are also unreliable — and in the context of a live event, unreliable captions are not just a nuisance. They are a barrier to participation.

Live event captioning accuracy is not a minor production detail. It is the dividing line between accessibility that works and accessibility that exists only on paper. The W3C, which publishes the Web Content Accessibility Guidelines (WCAG), is explicit on this point in its guidance on captions: automatically generated captions do not meet accessibility requirements unless they have been confirmed as fully accurate. For event producers, corporate communications teams, and AV professionals responsible for high-stakes live programming, that distinction carries real legal, reputational, and ethical consequences.

This post breaks down where auto-captions fall short, what the accessibility standards actually say, and what it takes to deliver captioning that meets both compliance thresholds and audience expectations at live events.

The Accuracy Gap Between Auto-Captions and Professional Captioning

Consumer-grade automatic speech recognition (ASR) has improved dramatically over the past decade. Platforms like YouTube, Zoom, and Google Meet offer built-in auto-captioning that performs reasonably well in controlled environments: a single speaker, clear audio, standard vocabulary, minimal background noise.

Live events are not controlled environments.

At a professional conference, a corporate town hall, a university commencement, or a large worship gathering, the audio conditions that auto-captions depend on rarely hold. Multiple speakers rotate. Accents vary. Technical terminology, proper nouns, organizational jargon, and industry-specific language appear constantly. Room acoustics introduce reverberation. Audio feeds pass through mixers, wireless systems, and signal chains that can introduce artifacts or latency.

Under these conditions, auto-caption accuracy drops significantly. Commonly cited accuracy ranges illustrate the gap:

| Captioning Method | Typical Accuracy Range | Common Failure Points and Limitations |
|---|---|---|
| Consumer auto-captions (Zoom, YouTube, Teams) | 70%–85% | Proper nouns, accents, overlapping speech, technical terms |
| Enhanced ASR with custom vocabulary | 80%–90% | Complex sentences, rapid speech, audio artifacts |
| Professional CART (Communication Access Realtime Translation) | 96%–99%+ | Cost, availability, single-language limitation |
| AI-human hybrid captioning | 95%–99%+ | Requires platform with trained review layer |

At 80% accuracy, roughly one in every five words is wrong. In a 30-minute keynote containing approximately 4,500 words, that translates to around 900 errors. For a Deaf or hard-of-hearing attendee relying on those captions as their primary channel, the experience is not accessibility — it is frustration.
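
The arithmetic behind that estimate can be sketched in a few lines of Python. This is an illustration only: the `expected_errors` helper is a hypothetical name, it treats accuracy as the share of words rendered correctly, and the 150 words-per-minute speaking rate is an assumption chosen to match the 4,500-word keynote above.

```python
def expected_errors(word_count: int, accuracy: float) -> int:
    """Estimate caption errors, treating accuracy as the share of words rendered correctly."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return round(word_count * (1.0 - accuracy))


# Assumed speaking rate: 150 words per minute for 30 minutes,
# which gives the 4,500-word keynote used in the example.
keynote_words = 150 * 30

for accuracy in (0.80, 0.90, 0.95, 0.99):
    errors = expected_errors(keynote_words, accuracy)
    print(f"{accuracy:.0%} accurate: about {errors} errors")
```

Running the same calculation for your own session lengths makes the gap between accuracy tiers concrete when comparing vendors.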

What WCAG Actually Requires for Live Event Captions

The Web Content Accessibility Guidelines are the globally recognized standard for digital accessibility, and they form the technical basis for most legal accessibility requirements in the United States, the European Union, Canada, and beyond. When organizations reference ADA compliance, Section 508, or the European Accessibility Act in the context of digital content, WCAG is the framework courts and regulators turn to.

WCAG addresses live captions under Success Criterion 1.2.4, Captions (Live), which is a Level AA requirement. Level AA is the standard most organizations are expected to meet, and it is the threshold referenced in the majority of legal and procurement contexts.

What the W3C Says About Auto-Captions

Here is where many event teams get tripped up. The W3C’s own supplementary guidance is clear: automatically generated captions alone do not satisfy WCAG requirements for live content unless those captions are confirmed to be fully accurate. The reasoning is straightforward. If captions contain significant errors, they do not provide equivalent access to the audio content. A caption track that misrepresents what a speaker is saying can actually be worse than no captions at all, because it introduces misinformation while creating the appearance of compliance.

This does not mean auto-captions are prohibited. It means they cannot be the entire solution. If an ASR engine produces output that is then reviewed, corrected, and verified in real time — either by a human operator or by a sufficiently accurate hybrid system — the result can meet the standard. The requirement is accuracy of output, not a specific method of production.

The Difference Between Having Captions and Having Accessible Captions

Many organizations check the captions box by enabling the built-in auto-caption feature on their streaming platform. That action provides captions. It does not necessarily provide accessible captions as defined by WCAG.

The distinction matters for several reasons:

- Legal exposure: Organizations subject to the ADA, Section 508, or equivalent regulations can face complaints and litigation when their captioning fails to provide meaningful access.

- Procurement requirements: Universities, government agencies, and large corporations increasingly include WCAG AA compliance in RFPs and vendor requirements for event technology.

- Audience trust: Attendees who depend on captions — not just Deaf and hard-of-hearing individuals, but also non-native speakers, people in noisy environments, and those with auditory processing differences — notice when captions are inaccurate. Poor captions signal that their participation was an afterthought.

Why Auto-Captions Fail Specifically at Live Events

Understanding the specific failure modes helps event teams make informed decisions about their captioning approach. Auto-caption errors at live events are not random. They cluster around predictable problem areas.

Proper Nouns and Specialized Vocabulary

Every live event has its own vocabulary. A medical conference uses clinical terminology. A corporate event references product names, internal acronyms, and executive names. A university commencement includes faculty titles, department names, and honoree biographies. A house of worship uses theological terms, scripture references, and liturgical language.

Consumer ASR engines are trained on general-purpose speech and text data. They have no awareness of your event’s specific lexicon. The result is systematic misrecognition of the words that matter most to your audience.

Multiple Speakers and Accents

Live events rarely feature a single speaker with broadcast-standard diction. Panels, Q&A sessions, multilingual presenters, and audience participation all introduce variability that degrades auto-caption performance. Accented English, code-switching between languages, and rapid conversational exchanges are particularly challenging for unsupervised ASR.

Audio Signal Quality

Auto-caption engines process whatever audio signal they receive. In a live production environment, that signal may pass through wireless microphone systems, mixing consoles, Dante networks, and streaming encoders before reaching the captioning layer. Each stage can introduce latency, compression artifacts, or signal degradation. A feed that sounds fine to a human listener may contain characteristics that confuse an ASR model.

Latency and Synchronization

Even when auto-captions are reasonably accurate, latency can undermine their usefulness. If captions arrive five to ten seconds behind the spoken word, attendees lose the connection between what they see on screen and what is happening on stage. For live events with visual presentations, audience laughter, or call-and-response moments, synchronization matters.

The 99% Accuracy Standard and Why It Matters

The captioning industry has long referenced a 99% accuracy rate as the benchmark for professional real-time captioning. This is not an arbitrary number. It reflects the threshold at which captions reliably convey meaning without requiring the reader to guess, re-read, or mentally correct errors.

Consider the practical difference:

| Accuracy Rate | Errors per 1,000 Words | Impact on Comprehension |
|---|---|---|
| 99% | 10 | Minor, generally non-disruptive |
| 95% | 50 | Noticeable, occasional confusion |
| 90% | 100 | Significant meaning loss in complex content |
| 80% | 200 | Unreliable for primary communication access |

At 99% accuracy, the occasional error is an inconvenience. At 80% accuracy, the captions fail their fundamental purpose. For attendees who rely on captions as their primary or sole means of accessing spoken content, the difference between these tiers is the difference between inclusion and exclusion.
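
One common way to put a number on caption accuracy is word error rate (WER): the count of substituted, deleted, and inserted words divided by the length of a verified reference transcript, with word accuracy taken as one minus WER. The sketch below is a minimal illustration, not a description of how any particular vendor scores captions. The function name and the sample sentences are invented for the example, and punctuation and capitalization handling is deliberately simplified.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance (substitutions + deletions + insertions) / reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from captions (deletion)
                curr[j - 1] + 1,     # extra word in captions (insertion)
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Invented sample: a verified transcript versus a caption with typical ASR slips.
reference = "please welcome doctor nguyen from the cardiology department"
hypothesis = "please welcome doctor win from the card geology department"

wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.1%}, word accuracy: {1 - wer:.1%}")
```

Note that a short sample like this exaggerates the rate. Meaningful measurements need a verified transcript of a representative stretch of the actual event.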

Professional CART providers have historically been the gold standard for achieving 99%+ accuracy. However, CART services face practical limitations for many event teams: high cost, limited availability (especially for multilingual events), scheduling constraints, and the challenge of scaling across multiple sessions or breakout rooms.

How AI-Human Hybrid Captioning Bridges the Gap

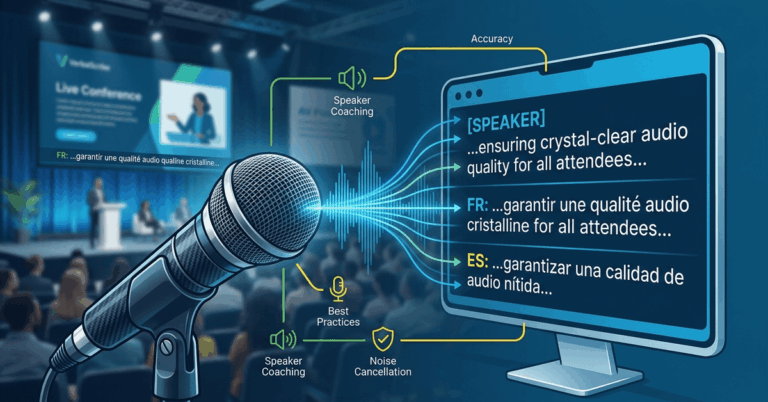

The most effective modern approach to live event captioning accuracy combines the speed and scalability of AI with the contextual judgment of human oversight. This hybrid model addresses the specific failure modes of pure auto-captioning while remaining more accessible and scalable than traditional CART.

How Hybrid Captioning Works

In a hybrid model, an advanced ASR engine generates the initial caption stream in real time. That stream is then monitored, corrected, and refined — either by a human editor working in real time or by a secondary AI layer trained to catch and correct the most common error patterns specific to live event content.

The most effective hybrid systems also support:

- Custom vocabulary loading before the event (speaker names, technical terms, organizational language)

- Real-time correction interfaces for operators

- Multilingual output from a single audio source

- Integration with professional AV workflows and display systems
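
To make the custom vocabulary idea concrete, here is a deliberately simple Python sketch of a post-processing pass that snaps near-miss words to a preloaded term list using the standard library's `difflib`. It is an assumption-laden illustration: production systems may instead bias the recognizer itself, which is not shown here, and the function name, the 0.8 similarity cutoff, and the sample terms are all hypothetical.

```python
import difflib


def apply_custom_vocabulary(caption: str, vocabulary: list[str], cutoff: float = 0.8) -> str:
    """Replace words that closely resemble a preloaded term with that term."""
    # Map lowercase forms back to the preferred spelling and capitalization.
    lookup = {term.lower(): term for term in vocabulary}
    candidates = list(lookup)

    corrected = []
    for word in caption.split():
        # Keep only the single best candidate at or above the similarity cutoff.
        match = difflib.get_close_matches(word.lower(), candidates, n=1, cutoff=cutoff)
        corrected.append(lookup[match[0]] if match else word)
    return " ".join(corrected)


# Hypothetical terms loaded before the event: a speaker name and two product names.
event_terms = ["Okafor", "ProPresenter", "Dante"]
raw_caption = "thanks to doctor okafur for the dante demo"

print(apply_custom_vocabulary(raw_caption, event_terms))
```

A fixed cutoff is a blunt instrument. Set it too low and ordinary words get rewritten into event terms, which is one reason the hybrid model pairs vocabulary loading with a human or secondary review layer.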

Why Hybrid Outperforms Pure Auto-Captions in Live Settings

The hybrid approach directly addresses the failure points outlined above. Proper nouns and specialized terms can be pre-loaded and prioritized. Speaker variation is handled by the human or advanced AI correction layer. Audio signal issues are mitigated by the system’s ability to apply contextual inference rather than relying solely on acoustic pattern matching.

For event producers, the hybrid model also offers a practical advantage: it can scale across multiple sessions, support multiple output languages simultaneously, and integrate with existing production infrastructure — capabilities that traditional single-operator CART cannot easily match.

What Event Producers Should Evaluate When Choosing a Captioning Solution

Selecting a captioning approach for a professional live event is a production decision with accessibility, legal, and audience experience implications. The following criteria help event teams make that evaluation with clarity.

Accuracy Under Real Conditions

Ask vendors about accuracy rates specifically in live event scenarios — not in quiet, single-speaker lab conditions. Request references from comparable events. Accuracy claims based on pre-recorded content are not meaningful for live applications.

Latency and Synchronization

Captions that arrive more than a few seconds behind the speaker lose their value in a live context. Evaluate end-to-end latency from microphone to displayed caption, including any network, encoding, and processing time.
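
End-to-end latency can be summarized from paired timestamps: when a phrase was spoken and when its caption appeared on screen. The following Python sketch assumes you can log both times in seconds from a shared clock. The function names, the sample timings, and the 3-second budget are illustrative assumptions, not a published threshold.

```python
import statistics


def caption_latencies(spoken_at: list[float], displayed_at: list[float]) -> list[float]:
    """Per-phrase delay in seconds between speech and the caption appearing."""
    if len(spoken_at) != len(displayed_at):
        raise ValueError("each spoken timestamp needs a matching display timestamp")
    return [shown - spoken for spoken, shown in zip(spoken_at, displayed_at)]


def summarize(latencies: list[float], budget_seconds: float = 3.0) -> dict:
    """Median and worst-case delay, plus how many phrases exceeded the assumed budget."""
    return {
        "median": statistics.median(latencies),
        "worst": max(latencies),
        "over_budget": sum(1 for delay in latencies if delay > budget_seconds),
    }


# Illustrative timings in seconds from the start of a session.
spoken = [0.0, 4.0, 9.0, 15.0]
displayed = [2.0, 6.5, 13.0, 17.0]

print(summarize(caption_latencies(spoken, displayed)))
```

Looking at the worst case alongside the median matters, because a single long stall during a key moment can lose the audience even when the typical delay is acceptable.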

Multilingual Capability

If your event serves a multilingual audience, captioning and translation should operate from the same platform and the same audio source. Managing separate vendors for captioning and translation introduces complexity, cost, and additional points of failure.

Integration With Your Production Workflow

Captioning should not require your AV team to build a separate technical infrastructure. Look for solutions that integrate with your existing audio routing, display systems (including tools like ProPresenter), and streaming platforms without adding fragile dependencies.

Compliance Documentation

For organizations subject to ADA, Section 508, or equivalent regulations, the ability to document your captioning accuracy and approach is important. Your captioning provider should be able to articulate how their solution meets WCAG AA requirements — not just claim compliance in a marketing bullet point.

Live event captioning accuracy is ultimately about whether your attendees can trust the words on screen. Auto-captions offer a starting point, but for professional events where accuracy, reliability, and accessibility compliance matter, they are not sufficient on their own. The WCAG standard is clear, and audience expectations are rising.

VerbalScribe is built for exactly this challenge. As a platform designed specifically for live event environments, VerbalScribe delivers real-time multilingual captioning with the accuracy, stability, and professional integration that production teams require. Whether you are producing a single conference or managing accessibility across a full event season, VerbalScribe provides enterprise-grade captioning that meets both compliance standards and the expectations of every attendee in the room. If your current captioning approach relies on unverified auto-captions, it may be time to evaluate whether your events are truly accessible — or just technically captioned.

Frequently Asked Questions

Do auto-captions meet WCAG accessibility requirements for live events?

Not by default. The W3C states that automatically generated captions do not meet WCAG requirements unless they are confirmed to be fully accurate. For live events, this means auto-captions alone typically fall short of the Level AA standard (Success Criterion 1.2.4) that most legal and regulatory frameworks reference. Captions must provide accurate, equivalent access to spoken content to satisfy the requirement.

What accuracy rate is considered acceptable for live event captions?

The professional captioning industry uses 99% accuracy as the benchmark for effective real-time captioning. At this level, errors are infrequent enough that they do not disrupt comprehension. Below 95%, caption errors become noticeable and can interfere with meaning. Below 90%, captions are generally considered unreliable as a primary communication channel.

What is AI-human hybrid captioning?

AI-human hybrid captioning uses an advanced automatic speech recognition engine to generate captions in real time, combined with a human editor or secondary AI correction layer that monitors and corrects errors as they occur. This approach combines the speed and scalability of AI with the contextual accuracy that live events demand, particularly for proper nouns, technical terms, and complex speech patterns.

Are organizations legally required to provide captions at live events?

Legal requirements vary by jurisdiction and organization type. In the United States, the ADA requires effective communication for individuals with disabilities, which courts have increasingly interpreted to include accurate captioning for live and streamed events. Section 508 applies to federal agencies and their contractors. The European Accessibility Act introduces similar requirements across EU member states. Universities, government agencies, and publicly funded organizations face the most explicit mandates, but any organization hosting public-facing events should evaluate its obligations.

How does live event captioning accuracy differ from pre-recorded captioning accuracy?

Pre-recorded content can be captioned with multiple review passes, manual editing, and unlimited time for corrections, making near-perfect accuracy straightforward to achieve. Live captioning must process speech in real time with minimal latency, leaving no opportunity for post-production review before the audience sees the output. This makes live captioning inherently more challenging and places a higher premium on the quality of the captioning system and any human oversight involved.

Can live event captions be delivered in multiple languages simultaneously?

Yes, with the right platform. Systems designed for live event environments can process a single audio source and generate caption output in multiple languages simultaneously using real-time translation. This allows multilingual audiences to follow the same presentation in their preferred language without requiring separate interpreters or audio channels for each language. VerbalScribe supports real-time multilingual captioning from a single audio feed as part of its core platform.